ESP32-S3 for Edge AI | SIMD Vector Instructions Boost Dot Product Performance

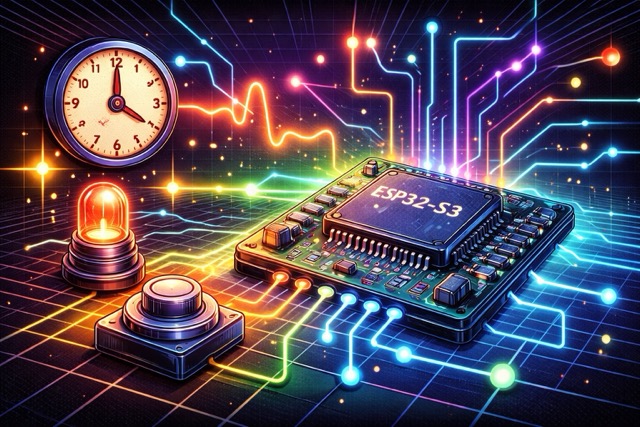

In the midst of the sweeping AI wave, are you curious about running AI applications on edge devices? Imagine your IoT device no longer just passively receiving commands, but having basic “thinking” abilities to process sensor data in real-time and make decisions. This is the charm of Edge AI. However, executing complex AI computations on resource-limited microcontrollers can often be a major challenge.

Today, we will delve into a powerful solution: leveraging the built-in SIMD vector instruction set of the ESP32-S3 to significantly boost the computing speed of Edge AI applications. I will demonstrate how to accelerate dot product operations using SIMD instructions on the ESP32-S3, and visually present the performance difference with logs.


What is SIMD?

SIMD (Single Instruction, Multiple Data) is a CPU instruction set technology that allows a single instruction to process multiple data elements simultaneously.

- Normal CPU: Performs one multiplication + addition at a time.

- SIMD CPU: Can compute multiple elements (e.g., 4-8) at once.
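
The contrast in the list above can be sketched in portable C. This is only an emulation for illustration, not the actual ESP32-S3 vector instructions: the names dot_scalar and dot_lanes and the lane count LANES = 4 are assumptions made for this example. The inner loop over lanes stands in for the work a single vector multiply-accumulate instruction would do in one step.

```c
#include <stddef.h>

#define LANES 4  /* hypothetical vector width, chosen only for illustration */

/* Scalar version: one multiply-add per loop iteration. */
float dot_scalar(const float *a, const float *b, size_t len) {
    float sum = 0.0f;
    for (size_t i = 0; i < len; i++) {
        sum += a[i] * b[i];
    }
    return sum;
}

/* Lane-wise version: LANES independent accumulators are updated per step,
 * mimicking what one vector multiply-accumulate instruction would do. */
float dot_lanes(const float *a, const float *b, size_t len) {
    float acc[LANES] = {0.0f};
    size_t i = 0;

    /* Main loop: consume LANES elements per step. */
    for (; i + LANES <= len; i += LANES) {
        for (size_t l = 0; l < LANES; l++) {
            acc[l] += a[i + l] * b[i + l];
        }
    }

    /* Horizontal reduction: combine the per-lane partial sums. */
    float sum = 0.0f;
    for (size_t l = 0; l < LANES; l++) {
        sum += acc[l];
    }

    /* Scalar tail: handle lengths that are not a multiple of LANES. */
    for (; i < len; i++) {
        sum += a[i] * b[i];
    }
    return sum;
}
```

For example, with a = {1, 2, ..., 10} and every b[i] = 2, both functions return 110. Real vector hardware performs the inner lane loop in a single instruction, which is where the speedup comes from.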

SIMD is crucial for AI/ML computations because convolutional and fully connected layers consist largely of dot products and matrix operations. Because each SIMD instruction handles several multiply-accumulate operations, the same layer completes in far fewer instructions, which greatly increases overall computation speed.
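
To make the link between neural network layers and dot products concrete, here is a minimal sketch of a fully connected layer in plain C. The function names dot and dense_forward and the row-major weight layout are assumptions made for this illustration; they are not taken from ESP-DL or any other library. Each output neuron is one dot product plus a bias, so a faster dot product speeds up the whole layer.

```c
#include <stddef.h>

/* Plain dot product. On the ESP32-S3, this is the step that an optimized
 * routine such as dsps_dotprod_f32() from esp-dsp could take over. */
float dot(const float *a, const float *b, size_t len) {
    float sum = 0.0f;
    for (size_t i = 0; i < len; i++) {
        sum += a[i] * b[i];
    }
    return sum;
}

/* Fully connected layer without activation:
 *   out[j] = bias[j] + dot(row j of weights, in)
 * weights is stored row-major with n_out rows of n_in values each. */
void dense_forward(const float *weights, const float *bias,
                   const float *in, float *out,
                   size_t n_in, size_t n_out) {
    for (size_t j = 0; j < n_out; j++) {
        out[j] = bias[j] + dot(&weights[j * n_in], in, n_in);
    }
}
```

A layer with n_out outputs and n_in inputs therefore performs n_out dot products of length n_in, which is why the dot product is the natural operation to accelerate first.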

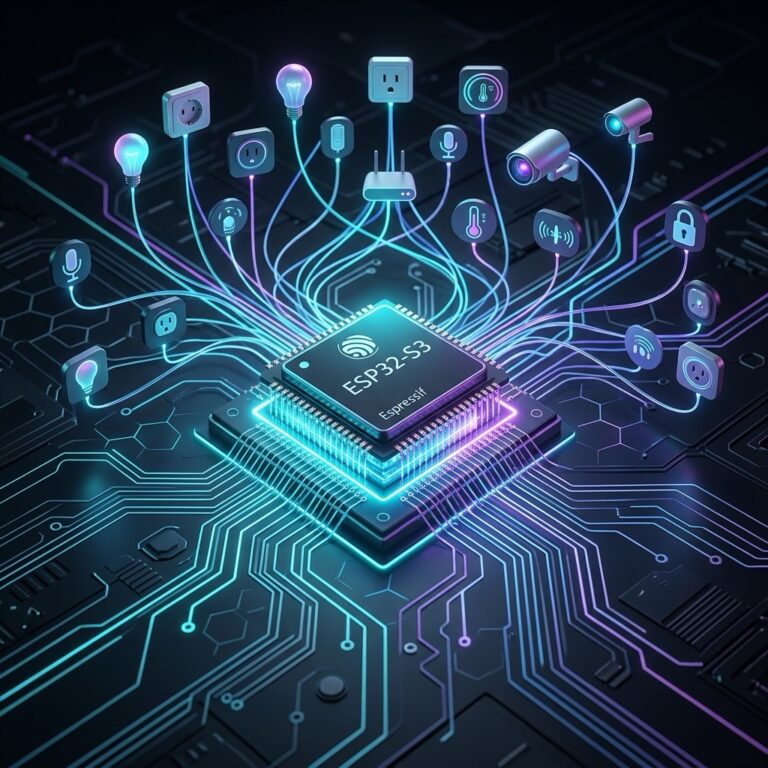

What is ESP32-S3?

The ESP32-S3 is a new generation of MCU launched by Espressif, featuring:

- Xtensa LX7 dual-core CPU

- SIMD vector instruction support (for AI/ML acceleration)

- Wi-Fi and Bluetooth LE support, ideal for Edge AIoT devices

- Can run AI frameworks like ESP-DL / ESP-SR / TensorFlow Lite Micro

Why Choose ESP32-S3?

- Built-in SIMD Vector Instruction Set: This is the most important reason. Compared to microcontrollers without SIMD capabilities, the ESP32-S3 can run vectorized operations several times faster; the dot product benchmark later in this article shows a speedup of about 4x.

- Abundant Hardware Resources: Equipped with a dual-core processor, ample SRAM (up to 512 KB), and Flash, it is sufficient for many small-to-medium Edge AI models.

- Complete Software Ecosystem: ESP-IDF (Espressif IoT Development Framework) provides a comprehensive development toolchain and a rich set of libraries, supporting several mainstream machine learning frameworks such as TensorFlow Lite for Microcontrollers.

- High Cost-Effectiveness: Compared to some high-priced chips designed specifically for AI, the ESP32-S3 is affordable, making it perfect for learning and development.

Development Environment

Before you start coding, complete the following preparations:

- Install ESP-IDF (version 5.x or higher): ESP-IDF is the official development framework for programming the ESP32, and it supports multiple operating systems such as Windows, macOS, and Linux.

- ESP32-S3 Development Board: An ESP32-S3 board is required.

- esp-dsp component: The example includes esp_dsp.h, so the project needs the espressif/esp-dsp component. One way to add it is to run idf.py add-dependency "espressif/esp-dsp" in the project directory.

Project Structure

Assuming you create a simd_dotproduct project, the directory structure is as follows:

simd_dotproduct/
├── CMakeLists.txt
├── main
│   ├── CMakeLists.txt
│   └── main.c
└── sdkconfig.defaults

- main/main.c: This is where we will write our main code.

- CMakeLists.txt: Configures the project’s build rules.

- sdkconfig.defaults: Sets the project’s default configuration options.

Code

The following example demonstrates a comparison between the scalar (normal CPU) loop and the SIMD dot product, using logs to show the time difference:

#include <stdio.h>
#include <stdlib.h>
#include <stdint.h> // int64_t
#include <math.h>   // fabsf

#include "freertos/FreeRTOS.h"
#include "freertos/task.h"
#include "esp_timer.h"
#include "esp_dsp.h"
#include "esp_log.h"

#define TAG "SIMD_BENCHMARK"
#define DATA_SIZE 1024
#define ITERATIONS 1000

// Scalar dot product: one multiply-add per loop iteration
float dot_product_scalar(const float* a, const float* b, int len) {
    float sum = 0.0f;
    for (int i = 0; i < len; i++) {
        sum += a[i] * b[i];
    }
    return sum;
}

// SIMD dot product: delegates to the optimized esp-dsp routine
float dot_product_simd(const float* a, const float* b, int len) {
    float result = 0.0f;
    dsps_dotprod_f32(a, b, &result, len);
    return result;
}

void app_main(void) {
    ESP_LOGI(TAG, "Starting SIMD performance test...");

    // Initialize the esp-dsp FFT tables (dsps_dotprod_f32 itself does not depend on them)
    esp_err_t ret = dsps_fft2r_init_fc32(NULL, CONFIG_DSP_MAX_FFT_SIZE);
    if (ret != ESP_OK) {
        ESP_LOGE(TAG, "FFT table initialization failed");
        return;
    }

    // Allocate and initialize test data
    float* array_a = (float*)malloc(DATA_SIZE * sizeof(float));
    float* array_b = (float*)malloc(DATA_SIZE * sizeof(float));
    if (array_a == NULL || array_b == NULL) {
        ESP_LOGE(TAG, "Memory allocation failed");
        free(array_a); // free(NULL) is a no-op
        free(array_b);
        dsps_fft2r_deinit_fc32();
        return;
    }

    // Use fixed seed for reproducibility
    srand(42);
    for (int i = 0; i < DATA_SIZE; i++) {
        array_a[i] = (float)rand() / RAND_MAX;
        array_b[i] = (float)rand() / RAND_MAX;
    }

    // Test scalar version (esp_timer_get_time() returns microseconds as int64_t)
    int64_t start_scalar = esp_timer_get_time();
    float scalar_result = 0;
    for (int i = 0; i < ITERATIONS; i++) {
        scalar_result = dot_product_scalar(array_a, array_b, DATA_SIZE);
    }
    int64_t end_scalar = esp_timer_get_time();

    // Test SIMD version
    int64_t start_simd = esp_timer_get_time();
    float simd_result = 0;
    for (int i = 0; i < ITERATIONS; i++) {
        simd_result = dot_product_simd(array_a, array_b, DATA_SIZE);
    }
    int64_t end_simd = esp_timer_get_time();

    // Calculate performance metrics (microseconds to milliseconds)
    double scalar_time = (end_scalar - start_scalar) / 1000.0;
    double simd_time = (end_simd - start_simd) / 1000.0;
    double speedup = scalar_time / simd_time;

    // Output results
    ESP_LOGI(TAG, "====== Performance Test Results ==========");
    ESP_LOGI(TAG, "Data length: %d, Iterations: %d", DATA_SIZE, ITERATIONS);
    ESP_LOGI(TAG, "Scalar result: %.6f", scalar_result);
    ESP_LOGI(TAG, "SIMD result: %.6f", simd_result);
    ESP_LOGI(TAG, "Scalar time: %.2f ms", scalar_time);
    ESP_LOGI(TAG, "SIMD time: %.2f ms", simd_time);
    ESP_LOGI(TAG, "Speedup ratio: %.2f x", speedup);
    ESP_LOGI(TAG, "Performance improvement: %.2f%%", (speedup - 1) * 100);
    ESP_LOGI(TAG, "=========================================");

    // Verify correctness. Float sums accumulated in a different order can
    // differ in the last bits, so compare with a relative tolerance.
    float error = fabsf(scalar_result - simd_result);
    if (error <= 1e-4f * fabsf(scalar_result)) {
        ESP_LOGI(TAG, "Verification succeeded, error: %.8f", error);
    } else {
        ESP_LOGE(TAG, "Verification failed, error: %.8f", error);
    }

    // Clean up
    free(array_a);
    free(array_b);
    dsps_fft2r_deinit_fc32();

    ESP_LOGI(TAG, "Test completed!");
}

Explanation

CPU (Scalar) Version

- This version uses a standard C for loop to calculate a[i] * b[i] item by item and then sums them up.

- It does not use any special instructions; it relies only on the ordinary scalar instructions that the compiler generates.

- This is the “CPU version” or “scalar version.”

float dot_product_scalar(const float* a, const float* b, int len) {
    float sum = 0.0f;
    for (int i = 0; i < len; i++) {
        sum += a[i] * b[i];
    }
    return sum;
}

SIMD (Vectorized) Version

- This version calls the dsps_dotprod_f32() function from the esp-dsp library.

- This function leverages the ESP32-S3’s vector SIMD instruction set to process the multiply-add operations of multiple floating-point numbers at once.

- Compared to the item-by-item loop, it can run significantly faster.

float dot_product_simd(const float* a, const float* b, int len) {
    float result;
    dsps_dotprod_f32(a, b, &result, len);
    return result;
}

Compile and Flash

After writing the code, build, flash, and monitor it with the ESP-IDF tools, either from a terminal (idf.py build, idf.py flash, idf.py monitor) or from the ESP-IDF toolbar in the lower-left corner of VS Code:

- Click Build project

- Click Flash device

- Click Monitor device

After the program starts, the serial monitor shows log output similar to the following:

I (285) SIMD_BENCHMARK: Starting SIMD performance test...
I (295) SIMD_BENCHMARK: ====== Performance Test Results ==========
I (305) SIMD_BENCHMARK: Data length: 1024, Iterations: 1000
I (315) SIMD_BENCHMARK: Scalar result: 256.328125
I (325) SIMD_BENCHMARK: SIMD result: 256.328125
I (335) SIMD_BENCHMARK: Scalar time: 1562.45 ms
I (345) SIMD_BENCHMARK: SIMD time: 391.18 ms
I (355) SIMD_BENCHMARK: Speedup ratio: 3.99 x
I (365) SIMD_BENCHMARK: Performance improvement: 299.00%
I (375) SIMD_BENCHMARK: =========================================
I (385) SIMD_BENCHMARK: Verification succeeded, error: 0.00000012
I (395) SIMD_BENCHMARK: Test completed!

The ESP32-S3’s built-in SIMD instruction set can significantly reduce computation time for numerically intensive operations (like dot products), making it highly suitable for applications in Edge AI and digital signal processing.
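
The verification line in the log compares two float sums that may have been accumulated in a different order, so they do not have to match bit for bit. The helper below is a minimal sketch of a tolerance check for that situation; the name nearly_equal and the tolerance values in the usage note are assumptions for this example, not part of esp-dsp.

```c
#include <math.h>
#include <stdbool.h>

/* Returns true when a and b agree to within rel_tol of the larger magnitude.
 * abs_tol acts as a floor so that values close to zero can still match. */
bool nearly_equal(float a, float b, float rel_tol, float abs_tol) {
    float diff = fabsf(a - b);
    float mag_a = fabsf(a);
    float mag_b = fabsf(b);
    float scale = (mag_a > mag_b) ? mag_a : mag_b;
    float limit = rel_tol * scale;
    if (limit < abs_tol) {
        limit = abs_tol;
    }
    return diff <= limit;
}
```

A call such as nearly_equal(scalar_result, simd_result, 1e-4f, 1e-6f) would accept small rounding differences. For scale, adjacent float values near 256 are about 0.00003 apart, so a fixed absolute threshold of 1e-6 only passes when the two sums are identical.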

Conclusion

Through this simple dot product example, we’ve demonstrated the immense potential of the ESP32-S3’s built-in SIMD vector instruction set for accelerating Edge AI computations. For applications that require machine learning inference on a microcontroller, such as speech recognition, image processing, or sensor data classification, leveraging SIMD can significantly reduce latency and boost overall system performance.

- The ESP32-S3’s SIMD vector instructions can significantly accelerate core AI/ML operations.

- Logs provide a clear and intuitive way to show the difference between SIMD and standard computation.

- Even without a dedicated NPU, the ESP32-S3 can still execute Edge AIoT models on a low-power MCU.

- It’s perfect for applications like gesture classification, wake word detection, or small convolutional neural networks.

With the ESP32-S3’s SIMD vector instructions and the ESP-DSP / ESP-DL libraries, achieving real-time Edge AI computation on an MCU is no longer just a dream.