YOLO11 Is Insane | The Fastest and Most Accurate AI Detection Model Yet!

YOLO11, the latest generation of real-time object detection models developed by Ultralytics, is driving a new wave of progress in computer vision.

It keeps the exceptional speed that defines the You Only Look Once series while improving detection accuracy and computational efficiency and extending support to multiple vision tasks.

As a cornerstone of computer vision, object detection plays a critical role across key domains such as industrial automation, autonomous driving, and intelligent surveillance systems.

Among the many available approaches, the YOLO series has become one of the most widely adopted, known for its fast inference and reliable detection performance.

Recently, Ultralytics officially released YOLO11, marking a new milestone in the evolution of real-time object detection technology.

This article walks through YOLO11's core ideas, the vision tasks it supports, and how to set up an environment and run a first inference, offering a practical introduction to this AI vision model.


What Is YOLO11?

YOLO11, developed by Ultralytics, represents the latest evolution of the You Only Look Once family and stands among the most advanced real-time object detection architectures to date.

The model continues YOLO’s fundamental philosophy — performing detection and classification in a single forward pass, allowing for ultra-fast and efficient visual inference.

Compared to its predecessors, YOLO11 introduces substantial improvements across multiple dimensions:

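- A more efficient architecture: Ultralytics reports that YOLO11m reaches a higher mAP on the COCO dataset than YOLOv8m while using 22% fewer parameters, which also helps keep inference fast.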

- Enhanced feature fusion and attention mechanisms, improving accuracy for small objects and complex environments.

- Support for multiple vision tasks within a unified framework: object detection, instance segmentation, pose estimation, oriented bounding box (OBB) detection, and image classification, each with its own pretrained model.

- Multiple model scales (YOLO11n, s, m, l, x) optimized for diverse hardware and deployment scenarios.

In essence, YOLO11 redefines the balance between speed, accuracy, and versatility, making it not just a detector, but a comprehensive AI vision engine.

How YOLO11 Works: The Core Mechanism

The core concept behind YOLO (You Only Look Once) is simple yet revolutionary:

An image is processed only once to detect all objects within it.

Unlike traditional two-stage detectors (e.g., R-CNN, Faster R-CNN) that first generate region proposals and then classify them, YOLO adopts an end-to-end, single-stage approach — dramatically increasing inference speed.

The detection process involves three main steps:

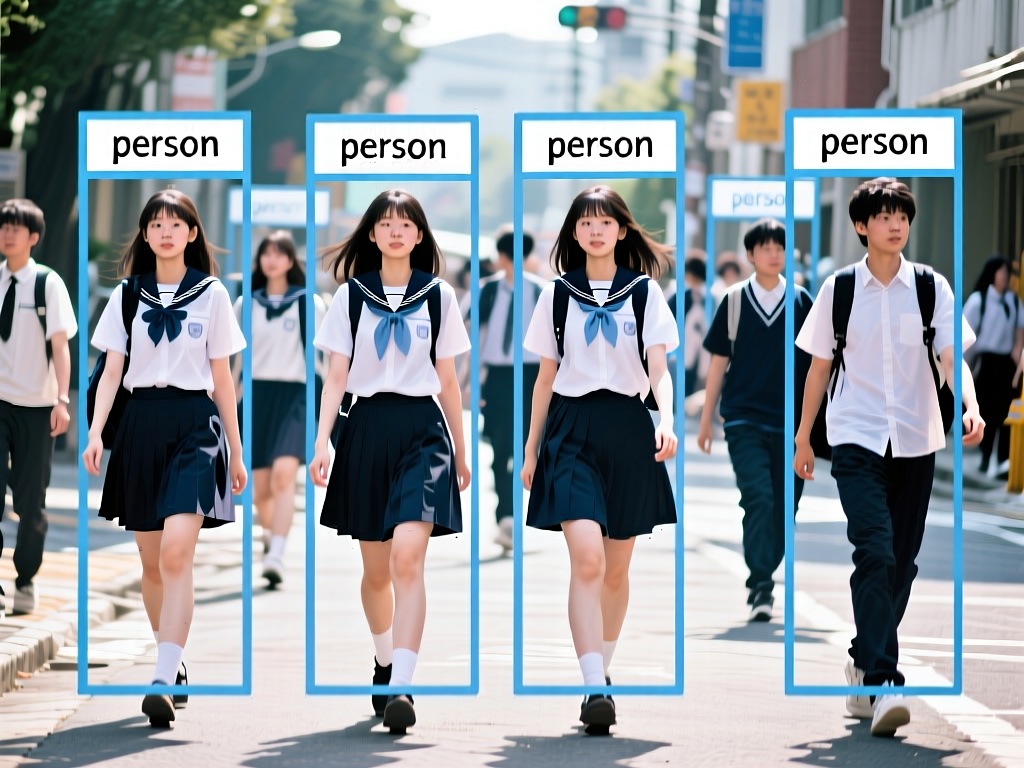

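- Grid Division: The input image is divided into a grid, and each cell is responsible for detecting objects whose centers fall within it. This is the classic YOLO formulation; YOLO11 applies the same idea on several feature maps of different resolutions, so small and large objects are handled by suitably sized cells.

- Bounding Box & Class Prediction: Each cell predicts bounding box coordinates together with confidence and class scores. The original YOLO predicted several boxes per cell with a separate objectness score, whereas anchor-free models such as YOLO11 predict one box per location on each feature map and use the class scores directly as confidence.

- Non-Maximum Suppression (NMS): Low-confidence boxes are discarded, and among heavily overlapping boxes only the highest-scoring one is kept, leaving one detection per object.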

Because everything happens in a single forward pass, YOLO keeps computational overhead low and reaches real-time speeds, which makes it a good fit for real-world and edge AI applications.
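To make steps 1 and 3 concrete, here is a toy, framework-free sketch in plain Python. It is not how YOLO11 is implemented internally (Ultralytics runs these steps on GPU tensors); the grid size `S`, the function names, the `(x1, y1, x2, y2, score)` box format, and the thresholds are illustrative assumptions, not Ultralytics defaults. `responsible_cell` shows the classic grid assignment, and `iou` plus `nms` implement standard greedy non-maximum suppression.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float, float]  # (x1, y1, x2, y2, score) in pixels


def responsible_cell(box: Box, img_w: int, img_h: int, S: int = 7) -> Tuple[int, int]:
    """Step 1: return (row, col) of the S x S grid cell that contains the box center."""
    x1, y1, x2, y2, _ = box
    cx, cy = (x1 + x2) / 2, (y1 + y2) / 2
    col = min(int(cx / img_w * S), S - 1)  # clamp so a center on the right edge stays in range
    row = min(int(cy / img_h * S), S - 1)
    return row, col


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], conf_thres: float = 0.25, iou_thres: float = 0.5) -> List[Box]:
    """Step 3: drop low-confidence boxes, then greedily keep the highest-scoring box
    and discard any remaining box that overlaps a kept box by more than iou_thres."""
    candidates = sorted((b for b in boxes if b[4] >= conf_thres), key=lambda b: b[4], reverse=True)
    kept: List[Box] = []
    for box in candidates:
        if all(iou(box, k) <= iou_thres for k in kept):
            kept.append(box)
    return kept


if __name__ == "__main__":
    raw = [
        (100, 100, 200, 200, 0.90),  # strong detection
        (105, 102, 205, 198, 0.75),  # near-duplicate of the first box
        (300, 300, 380, 400, 0.60),  # separate object
        (50, 50, 60, 60, 0.10),      # below the confidence threshold
    ]
    print(nms(raw))  # → [(100, 100, 200, 200, 0.9), (300, 300, 380, 400, 0.6)]
    print(responsible_cell(raw[0], img_w=640, img_h=640))  # → (1, 1)
```

The near-duplicate box overlaps the first one with an IoU of about 0.87, so it is suppressed, while the separate object survives.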

What Can YOLO Do?

YOLO isn’t just an object detector — it’s a multi-purpose computer vision engine capable of handling diverse visual tasks:

- Real-Time Object Detection: Identify objects like people, vehicles, animals, or industrial components in milliseconds.

- Instance Segmentation: Detect and outline object boundaries for tasks like defect detection, medical imaging, or AR/VR.

- Pose Estimation: Recognize human body keypoints and movements for fitness, behavior analysis, or sports analytics.

- Classification: Assign a category to a whole image, useful for retail, visual search, and quality control.

- One Framework, Many Tasks: Each task has its own pretrained YOLO11 model, but all of them share the same Python API and CLI, which simplifies training and deployment, including at the edge.
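In the Ultralytics package, each task has its own pretrained checkpoint, and the file names follow the pattern `yolo11{scale}{suffix}.pt` (for example `yolo11n-seg.pt` or `yolo11s-pose.pt`). The helper below, `yolo11_weights`, is a hypothetical convenience function written for this article, not part of the Ultralytics API; it only builds the file name that you would then pass to `YOLO(...)`.

```python
# Hypothetical helper (not part of the Ultralytics API): builds the checkpoint
# file name for a given YOLO11 task and model scale.
TASK_SUFFIX = {
    "detect": "",        # object detection, e.g. yolo11n.pt
    "segment": "-seg",   # instance segmentation
    "pose": "-pose",     # keypoint / pose estimation
    "classify": "-cls",  # whole-image classification
    "obb": "-obb",       # oriented bounding boxes
}
SCALES = ("n", "s", "m", "l", "x")


def yolo11_weights(task: str = "detect", scale: str = "n") -> str:
    """Return the YOLO11 checkpoint name for a task and scale."""
    if task not in TASK_SUFFIX:
        raise ValueError(f"unknown task {task!r}; expected one of {sorted(TASK_SUFFIX)}")
    if scale not in SCALES:
        raise ValueError(f"unknown scale {scale!r}; expected one of {SCALES}")
    return f"yolo11{scale}{TASK_SUFFIX[task]}.pt"


if __name__ == "__main__":
    for task in TASK_SUFFIX:
        print(f"{task:>8} -> {yolo11_weights(task)}")
```

With `ultralytics` installed, `YOLO(yolo11_weights("pose"))` would load the nano pose model, and the same `model(image)` call used for detection then returns results that also carry keypoints.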

Development Environment

Ultralytics provides an official Python package and CLI tool, making setup fast and simple.

System Requirements:

| Component | Recommended |
|---|---|
| OS | Windows / macOS / Linux |
| Python | ≥ 3.8 |
| GPU | NVIDIA GPU with CUDA 11+ (optional, for acceleration) |
| Framework | PyTorch (auto-installed) |
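You can check the table's main requirements from Python itself before going further. This is a small sketch using only the standard library; the PyTorch/CUDA check runs only if PyTorch is already installed, and the `check_environment` name is just for illustration. CUDA is optional: without it, YOLO still runs on the CPU.

```python
import importlib.util
import platform
import sys


def check_environment() -> dict:
    """Report the items from the requirements table that Python can inspect."""
    report = {
        "os": platform.system(),  # e.g. 'Linux', 'Darwin' (macOS), 'Windows'
        "python": platform.python_version(),
        "python_ok": sys.version_info >= (3, 8),
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "cuda_available": False,
    }
    if report["torch_installed"]:
        import torch  # imported lazily so the check also works before PyTorch is installed

        report["cuda_available"] = bool(torch.cuda.is_available())
    return report


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key:>16}: {value}")
```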

Setting Up a Virtual Environment

Creating a virtual environment helps keep your workspace clean and avoids dependency conflicts:

```bash
python -m venv yoloenv
```

Activate the environment:

```bash
# macOS / Linux
source yoloenv/bin/activate

# Windows
yoloenv\Scripts\activate
```

Once you see (yoloenv) in your terminal prompt, the environment is active.
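If your prompt doesn't show the environment name, you can also confirm it from Python. With the standard `venv` module, `sys.prefix` points inside the virtual environment while `sys.base_prefix` still points at the base installation, so the two differ only when a venv is active. The `in_virtualenv` name is illustrative.

```python
import sys


def in_virtualenv() -> bool:
    """True when the running interpreter belongs to a venv-created environment."""
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("venv active:", in_virtualenv())
    print("interpreter prefix:", sys.prefix)
```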

Installing YOLO

Install the official Ultralytics package, which provides the YOLO Python API and the `yolo` CLI (model weights are downloaded automatically the first time you use them):

```bash
pip install ultralytics
```

Verify your installation:

```bash
yolo version
```

If this prints a version number of 8.3.0 or later, the installation succeeded; YOLO11 support was introduced in the 8.3 release line.
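The same check can be done from Python, which is handy in setup scripts or notebooks. This sketch reads the installed version with the standard library's `importlib.metadata` and compares it with 8.3.0, the release in which YOLO11 first shipped. `parse_version` is a simplified parser that ignores suffixes such as `.dev0`; it is not a full PEP 440 implementation, and both helper names are illustrative.

```python
import re
from importlib.metadata import PackageNotFoundError, version
from typing import Optional, Tuple

MIN_YOLO11_VERSION = (8, 3, 0)  # YOLO11 first shipped in ultralytics 8.3.0


def parse_version(text: str) -> Tuple[int, ...]:
    """Simplified parser: '8.3.40' -> (8, 3, 40); non-numeric suffixes are ignored."""
    parts = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    parts += [0] * (3 - len(parts))  # pad short versions such as '8.3'
    return tuple(parts)


def installed_ultralytics() -> Optional[str]:
    """Return the installed ultralytics version string, or None if it is missing."""
    try:
        return version("ultralytics")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_ultralytics()
    if found is None:
        print("ultralytics is not installed; run: pip install ultralytics")
    elif parse_version(found) >= MIN_YOLO11_VERSION:
        print(f"ultralytics {found}: YOLO11 models are supported")
    else:
        print(f"ultralytics {found} predates YOLO11; run: pip install -U ultralytics")
```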

Code

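Run the following Python script to test your setup. On the first run, Ultralytics downloads the `yolo11n.pt` weights automatically and the script fetches the sample image from a URL, so an internet connection is needed.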

If it executes without errors and displays the result, your environment is ready to go.

# ===============================

# YOLO Complete Python Example

# ===============================

import os

from ultralytics import YOLO

# Set up project folder

project_dir = "/Users/kdm4/Desktop/yoloenv/main/outputs" # Customize the save folder

os.makedirs(project_dir, exist_ok=True) # Automatically create if it doesn't exist

# Load YOLO pre-trained model

# This is the nano version – lightweight and fast

model = YOLO("yolo11n.pt")

# Run inference on an image and save results

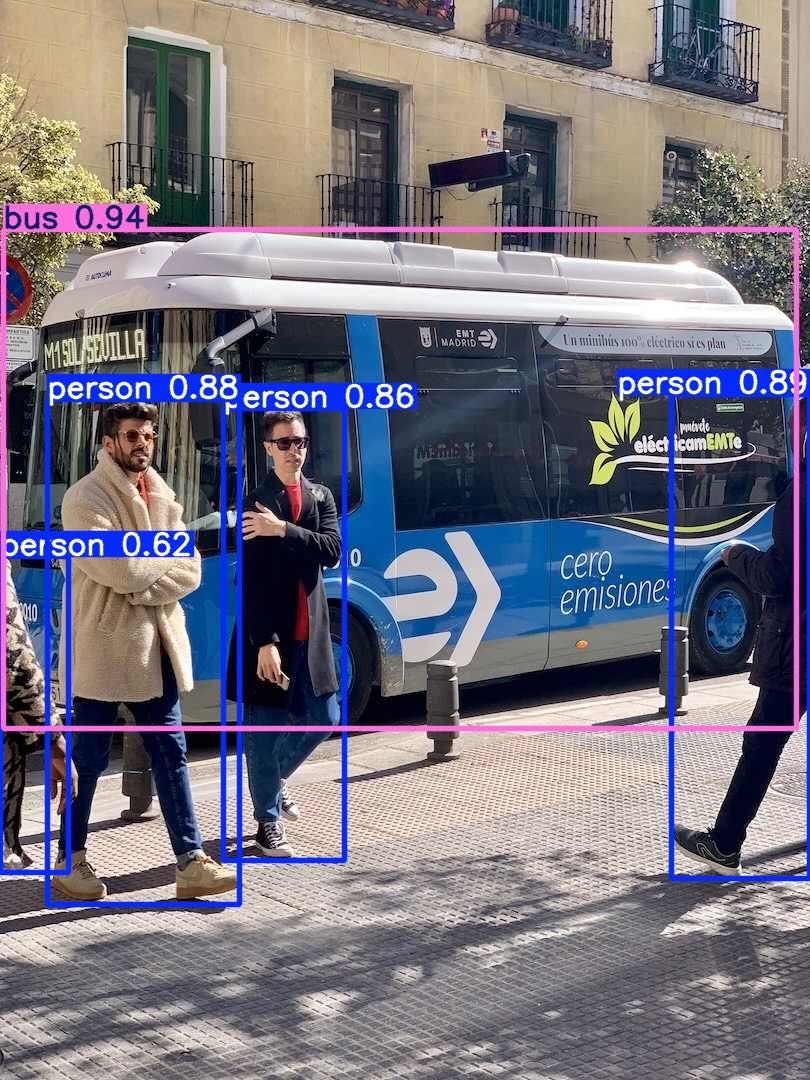

image_source = "https://ultralytics.com/images/bus.jpg"

results = model(

image_source,

save=True, # Automatically save the output

project=project_dir, # Specify the save location

name="demo", # Subfolder name

)

# Display inference results

results[0].show() # Opens a window showing detection boxes

# Print bounding box details

for i, box in enumerate(results[0].boxes):

cls_id = int(box.cls) # Class index

conf = float(box.conf) # Confidence score

xyxy = box.xyxy[0].tolist() # Bounding box coordinates [x1, y1, x2, y2]

print(f"Object {i+1}: Class {cls_id}, Confidence {conf:.2f}, BBox {xyxy}")

# Show save directory

print(f"Results saved to: {results[0].save_dir}")Code Explanation

Importing Modules:

```python
import os
from ultralytics import YOLO
```

- `os` manages file paths; `ultralytics.YOLO` loads and runs the model.

Setting the Project Directory:

```python
project_dir = "/Users/kdm4/Desktop/yoloenv/main/outputs"
os.makedirs(project_dir, exist_ok=True)
```

- Creates an output folder automatically for saving inference results.

Loading the Model:

```python
model = YOLO("yolo11n.pt")
```

- `YOLO("yolo11n.pt")` loads the nano version, the smallest and fastest YOLO11 detection model.

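Running Inference:

```python
results = model(
    "https://ultralytics.com/images/bus.jpg",
    save=True,
    project=project_dir,
    name="demo",
)
```

- Detects objects in the given image and saves the annotated output to a `demo` subfolder of your specified directory (if that subfolder already exists, Ultralytics creates `demo2`, `demo3`, and so on).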

Displaying Results:

```python
results[0].show()
```

- Opens a preview window with bounding boxes and class labels.

Printing Box Data:

```python
for i, box in enumerate(results[0].boxes):
    cls_id = int(box.cls)
    conf = float(box.conf)
    xyxy = box.xyxy[0].tolist()
    print(f"Object {i+1}: Class {cls_id}, Confidence {conf:.2f}, BBox {xyxy}")
```

- Displays class IDs, confidence scores, and bounding box coordinates in the terminal.
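The `[x1, y1, x2, y2]` values printed in this loop are pixel corner coordinates. YOLO-format training labels instead store a normalized center point plus width and height, which ties back to the grid idea from earlier. Ultralytics already exposes these forms on each box (`box.xywh`, `box.xyxyn`, `box.xywhn`), so the conversion below is only a hand-written sketch of the arithmetic; the function names are our own.

```python
from typing import Sequence, Tuple


def xyxy_to_yolo(xyxy: Sequence[float], img_w: int, img_h: int) -> Tuple[float, float, float, float]:
    """Convert pixel corners [x1, y1, x2, y2] to normalized (x_center, y_center, width, height)."""
    x1, y1, x2, y2 = xyxy
    return (
        (x1 + x2) / 2 / img_w,  # center x as a fraction of image width
        (y1 + y2) / 2 / img_h,  # center y as a fraction of image height
        (x2 - x1) / img_w,      # box width as a fraction of image width
        (y2 - y1) / img_h,      # box height as a fraction of image height
    )


def yolo_label_line(cls_id: int, xyxy: Sequence[float], img_w: int, img_h: int) -> str:
    """Format one detection as a YOLO-style label line: 'class xc yc w h'."""
    xc, yc, w, h = xyxy_to_yolo(xyxy, img_w, img_h)
    return f"{cls_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


if __name__ == "__main__":
    # Illustrative values for a 640x480 image, not output from a real run.
    print(yolo_label_line(0, [160.0, 120.0, 480.0, 360.0], img_w=640, img_h=480))
    # → 0 0.500000 0.500000 0.500000 0.500000
```

To feed it from the script, pass `box.xyxy[0].tolist()` along with the original image size, which `results[0].orig_shape` provides as `(height, width)`.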

Displaying the Save Path:

```python
print(f"Results saved to: {results[0].save_dir}")
```

- Prints the folder where the inference results were saved.

When you run it:

- A window pops up showing detections.

- The terminal lists object details.

- The output folder contains labeled images ready for analysis or sharing.
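A common next step is to summarize the detections, for example counting how many objects of each class were found above a confidence threshold. The sketch below works on plain `(class_id, confidence)` pairs plus a class-name mapping, which you could collect in the script's loop (`int(box.cls)`, `float(box.conf)`) and from `results[0].names`; the function name and the 0.5 threshold are illustrative choices.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def count_by_class(
    detections: Iterable[Tuple[int, float]],
    names: Dict[int, str],
    min_conf: float = 0.5,
) -> Dict[str, int]:
    """Count detections per class name, ignoring those below min_conf.
    Unknown class IDs fall back to their numeric ID as a string."""
    counts = Counter(
        names.get(cls_id, str(cls_id)) for cls_id, conf in detections if conf >= min_conf
    )
    return dict(counts.most_common())  # most frequent class first


if __name__ == "__main__":
    # Illustrative input only; in practice, collect these pairs from results[0].boxes.
    demo = [(0, 0.91), (0, 0.84), (5, 0.88), (0, 0.42)]
    print(count_by_class(demo, names={0: "person", 5: "bus"}))
    # → {'person': 2, 'bus': 1}
```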

Conclusion

YOLO11 isn’t just another upgrade in the YOLO family — it’s a revolution in real-time computer vision.

Like lightning, it slices through the limits of visual computation with breathtaking speed, while its precision locks onto every detail like an eagle’s gaze. Whether in smart retail, traffic surveillance, or autonomous driving, it recognizes and interprets the world in milliseconds — delivering unmatched efficiency and reliability.

More impressively, this model doesn’t just see faster or see better — it sees more.

From object detection and instance segmentation to pose estimation and image classification, a single framework and a single API now cover it all, redefining the entire visual AI workflow.

For researchers, it’s a tool for discovery.

For developers, a bridge to rapid deployment.

For enterprises, a catalyst for intelligent transformation.

In this era of accelerated AI evolution, YOLO11 represents not just a technological leap, but a step toward making intelligent vision truly universal — enabling every device to see the world, and every application to understand it.