2026 YOLO Custom Model Tutorial | From Training to Inference

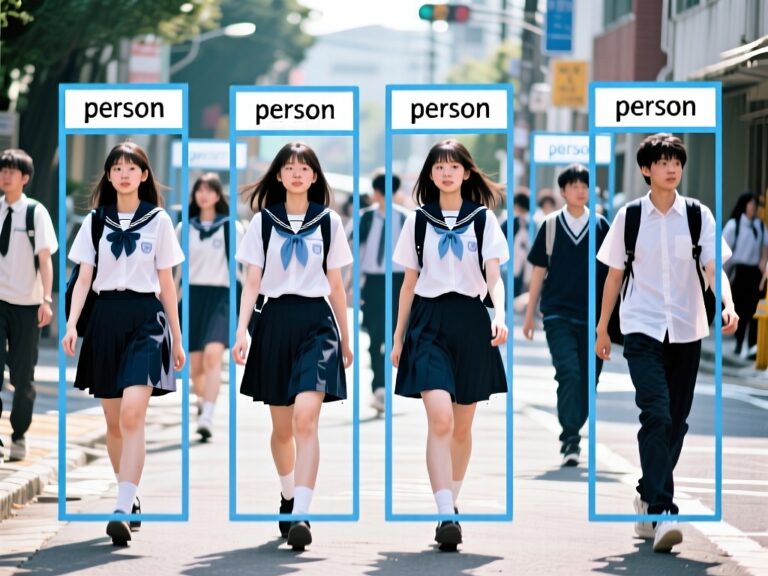

YOLO Custom Models are a game-changer for computer vision developers! Generic YOLO models often struggle in specialized scenarios, like misidentifying industrial parts, confusing pets with cushions, or completely failing to detect specific objects. Custom models, however, can be retrained on your own dataset to focus on the targets that matter, significantly improving accuracy and reliability. The latest YOLO version is not only faster in inference and quicker to train, but it can also run efficiently on edge devices.

This tutorial doubles as a pitfall guide and a practical manual. It walks through data preparation, model training, and inference, with code, templates, and tips that help you quickly build a YOLO custom model and run it on a PC, in the cloud, or on edge devices, so you can detect whatever you want, wherever you need it.


What is a YOLO Custom Model?

A YOLO Custom Model is a YOLO real-time object detection model retrained on your own dataset. This allows the model to focus on specific scenarios, objects, or tasks. Compared to general models pre-trained on broad datasets such as COCO, custom models greatly enhance accuracy and stability in specialized applications.

In real-world use cases, generic models often fall short, for example:

- Industrial inspection: detect only specific parts or defects

- Smart surveillance: focus on faces, gestures, or particular behaviors

- Medical, agricultural, or retail applications: custom detection needs

With YOLO Custom Models, you train the network only on “important targets,” reducing unnecessary class interference while balancing speed and precision.

Core Workflow

The YOLO Custom Model workflow consists of four main stages:

- Data Preparation
  - Collect images or videos from the target environment
  - Annotate them in YOLO format (bounding boxes + class labels)

- Model Selection & Initialization
  - Choose a suitable YOLO model version (e.g., YOLOv8n/s/m)
  - Use official or community pre-trained weights as a starting point (transfer learning)

- Custom Training (Fine-tuning)
  - Train the model on your dataset
  - Adjust training parameters (epochs, batch size, image size)

- Inference & Deployment
  - Run predictions on images, videos, or live streams using trained weights
  - Deploy locally, on edge devices, or in the cloud as needed

This workflow is highly flexible and ideal for rapid iteration and deployment.
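To make the "YOLO format" annotation step concrete, here is a minimal sketch of how one pixel-space bounding box becomes one line of a YOLO label file (class id followed by a normalized center, width, and height). The function name `to_yolo_line` and the example numbers are hypothetical and chosen only for illustration.

```python
def to_yolo_line(class_id, xmin, ymin, xmax, ymax, img_w, img_h):
    """Convert a pixel-space box to one YOLO detection label line.

    A label line holds: class x_center y_center width height,
    with the four box values normalized to the 0-1 range.
    """
    if not (0 <= xmin < xmax <= img_w and 0 <= ymin < ymax <= img_h):
        raise ValueError("box must lie inside the image and have positive size")
    x_center = (xmin + xmax) / 2 / img_w
    y_center = (ymin + ymax) / 2 / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Hypothetical example: class 0, a 200x100 px box inside a 640x480 image.
print(to_yolo_line(0, 100, 200, 300, 300, 640, 480))
# -> 0 0.312500 0.520833 0.312500 0.208333
```

Each image gets one text file with the same base name, containing one such line per object. Annotation tools usually export this format directly, so a converter like this is mainly useful when migrating existing pixel-coordinate annotations.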

Development Environment

Ultralytics provides official Python packages and CLI tools for YOLO, making installation and environment setup simple.

System Requirements:

| Component | Recommended |
|---|---|
| OS | Windows / macOS / Linux |
| Python | ≥ 3.8 |
| GPU | NVIDIA GPU with CUDA 11+ (for acceleration) |
| Framework | PyTorch (auto-installed) |

Setting Up a Virtual Environment

Creating a virtual environment helps keep your workspace clean and avoids dependency conflicts:

python -m venv yoloenv

Activate the environment:

# macOS / Linux
source yoloenv/bin/activate

# Windows
yoloenv\Scripts\activate

Once you see (yoloenv) in your terminal prompt, the environment is active.
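If the prompt is customized and does not show the environment name, the same check can be done from Python. This is a small sketch that relies only on the standard library; the helper names `in_virtualenv` and `python_ok` are made up for this example.

```python
import sys


def in_virtualenv():
    """Return True when the interpreter runs inside a venv-style environment."""
    # Inside a venv, sys.prefix points at the environment directory, while
    # sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


def python_ok(minimum=(3, 8)):
    """Return True when the running Python meets the minimum version."""
    return sys.version_info >= minimum


print("virtual environment active:", in_virtualenv())
print("Python version OK:", python_ok())
```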

Installing YOLO

Install the official Ultralytics package, which includes the YOLO models and CLI tools:

pip install ultralytics

Verify your installation:

yolo version

If you see a version number like 8.3.x, the installation was successful. YOLO11 is included in this release branch.
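The same verification can be scripted, which is handy in setup scripts or CI. The sketch below uses the standard library to look up installed package versions and only imports `torch` if it is present; `package_version` is a hypothetical helper name.

```python
import importlib.util
from importlib import metadata


def package_version(name):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


for pkg in ("ultralytics", "torch"):
    print(pkg, package_version(pkg) or "not installed")

# Only query the GPU when PyTorch is actually importable.
if importlib.util.find_spec("torch") is not None:
    import torch

    print("CUDA available:", torch.cuda.is_available())
```

On a machine without an NVIDIA GPU, `CUDA available: False` is expected and training simply falls back to the CPU (or another backend, depending on the platform).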

Dataset Preparation

coco128 is a small demo dataset officially provided by Ultralytics, designed for quickly verifying that your training pipeline and configuration are set up correctly.

Download and extract:

mkdir -p datasets
cd datasets
curl -L -o coco128.zip https://github.com/ultralytics/assets/releases/download/v0.0.0/coco128.zip
unzip coco128.zip
# return to the project root for the following steps
cd ..

Verify Dataset Structure:

datasets/coco128/
├── images/train2017/
├── labels/train2017/
└── coco128.yaml   # created in the next step

Generate coco128.yaml

The coco128.yaml file is the most critical dataset configuration file when training a YOLO custom model.

It serves one purpose only: telling YOLO where your dataset is located and which classes to train.

It is not an image file and not an annotation file — it is the dataset specification.

During training, YOLO performs the following steps:

- Reads coco128.yaml

- Locates the dataset root via path

- Loads images according to the train/val paths

- Builds the classification head based on names (class definitions)
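The steps above can be mirrored in a small pre-flight check that catches the most common dataset mistakes before training starts. This is a sketch, not Ultralytics' own loader: it takes the configuration as a plain dictionary with the same keys as the YAML file, assumes labels live in a parallel `labels/` tree next to `images/`, and the name `check_split` is hypothetical.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def check_split(config, split="train"):
    """Return (images without a label file, label files with out-of-range class ids)."""
    root = Path(config["path"])
    rel = Path(config[split])
    image_dir = root / rel
    # Assumed layout: labels mirror the image path, with "images" swapped for "labels".
    label_dir = root / Path(*("labels" if part == "images" else part for part in rel.parts))
    num_classes = len(config["names"])

    missing, bad_ids = [], []
    for image in sorted(image_dir.iterdir()):
        if image.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        label = label_dir / (image.stem + ".txt")
        if not label.exists():
            missing.append(image.name)
            continue
        for line in label.read_text().splitlines():
            # The first field of every label line is the class id.
            if line.strip() and not 0 <= int(line.split()[0]) < num_classes:
                bad_ids.append(label.name)
                break
    return missing, bad_ids
```

Calling `check_split(config)` with a dictionary such as `{"path": "datasets/coco128", "train": "images/train2017", "names": {...}}` returns two lists. An image without a label file is not necessarily an error, since it may be an intentional background image, but a long list usually points to a naming or path mismatch. A class id outside the `names` range is always worth fixing.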

Create the File

Create the dataset configuration file with:

nano datasets/coco128/coco128.yaml

Then paste the following content into the file:

# COCO128 dataset (128 images from COCO train2017)
path: datasets/coco128
train: images/train2017
val: images/train2017 # COCO128 has no separate val set, using train instead
names:
  0: person
  1: bicycle
  2: car
  3: motorcycle
  4: airplane
  5: bus
  6: train
  7: truck
  8: boat
  9: traffic light
  10: fire hydrant
  11: stop sign
  12: parking meter
  13: bench
  14: bird
  15: cat
  16: dog
  17: horse
  18: sheep
  19: cow
  20: elephant
  21: bear
  22: zebra
  23: giraffe
  24: backpack
  25: umbrella
  26: handbag
  27: tie
  28: suitcase
  29: frisbee
  30: skis
  31: snowboard
  32: sports ball
  33: kite
  34: baseball bat
  35: baseball glove
  36: skateboard
  37: surfboard
  38: tennis racket
  39: bottle
  40: wine glass
  41: cup
  42: fork
  43: knife
  44: spoon
  45: bowl
  46: banana
  47: apple
  48: sandwich
  49: orange
  50: broccoli
  51: carrot
  52: hot dog
  53: pizza
  54: donut
  55: cake
  56: chair
  57: couch
  58: potted plant
  59: bed
  60: dining table
  61: toilet
  62: tv
  63: laptop
  64: mouse
  65: remote
  66: keyboard
  67: cell phone
  68: microwave
  69: oven
  70: toaster
  71: sink
  72: refrigerator
  73: book
  74: clock
  75: vase
  76: scissors
  77: teddy bear
  78: hair dryer
  79: toothbrush
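For your own dataset, the same file layout applies with your own paths and class names. As an alternative to typing the entries by hand, the sketch below generates the YAML text from a Python list. The dataset path `datasets/parts` and the three class names are hypothetical, and the helper does no YAML escaping, so it assumes plain class names without colons or quotes.

```python
def build_dataset_yaml(path, train, val, names):
    """Return dataset YAML text in the same layout as coco128.yaml."""
    lines = [f"path: {path}", f"train: {train}", f"val: {val}", "names:"]
    # Class ids follow list order, starting at 0; entries are indented under names.
    lines += [f"  {class_id}: {name}" for class_id, name in enumerate(names)]
    return "\n".join(lines) + "\n"


# Hypothetical custom dataset with three classes.
text = build_dataset_yaml(
    "datasets/parts", "images/train", "images/val", ["bolt", "nut", "washer"]
)
print(text)
```

Writing `text` to a file (for example with `pathlib.Path(...).write_text(text)`) gives a configuration you can pass to the training command. The class ids in the label files must match the order of this list.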

Saving the File:

When editing files (e.g., with nano):

Ctrl + O → Enter → Ctrl + X

Project Structure

Full Project Structure:

yolo_custom_demo/
├── datasets/
│   └── coco128/
│       ├── images/
│       │   └── train2017/
│       ├── labels/
│       │   └── train2017/
│       └── coco128.yaml
├── yoloenv/       # Python virtual environment
├── runs/          # Training and inference outputs
└── yolov8n.pt     # Official pretrained YOLO model

Training Command

Before running the training command, make sure you are in the project root directory.

yolo detect train \
  model=yolov8n.pt \
  data=datasets/coco128/coco128.yaml \
  epochs=3 \
  imgsz=640

- yolo detect train: Starts YOLO in object detection training mode.

- model=yolov8n.pt: Specifies the official pretrained YOLOv8n model as the training starting point.

- data=datasets/coco128/coco128.yaml: Points to the dataset configuration file, which defines the training/validation paths and class names.

- epochs=3: Sets the total number of training epochs. Adjust this value based on dataset size and training goals.

- imgsz=640: Sets the input image size to 640×640. YOLO will automatically resize images during training.
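The same training run can be started from Python through the Ultralytics `YOLO` class, which is convenient when training is one step in a larger script. The sketch below passes the same values as the CLI command; the wrapper functions `train_args` and `train_custom` are hypothetical names introduced here, and the import sits inside the function so the file can be loaded even where `ultralytics` is not installed.

```python
def train_args(data="datasets/coco128/coco128.yaml", epochs=3, imgsz=640):
    """Collect the settings that the CLI command passes as key=value pairs."""
    return {"data": data, "epochs": epochs, "imgsz": imgsz}


def train_custom(weights="yolov8n.pt", **overrides):
    """Fine-tune pretrained weights on the configured dataset."""
    # Imported lazily so this module loads without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(weights)  # load the pretrained starting point
    return model.train(**train_args(**overrides))
```

Calling `train_custom()` from the project root reproduces the command above, and `train_custom(epochs=50)` overrides a single setting while keeping the rest.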

Training Process

During training, YOLO automatically computes loss values, precision/recall, mAP, and other evaluation metrics.
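To give a feel for what precision and recall mean for a detector, here is a deliberately simplified sketch: a prediction counts as correct when its intersection over union (IoU) with an unmatched ground-truth box reaches a threshold. This is not Ultralytics' implementation, which also accounts for confidence scores and classes and averages over several IoU thresholds to obtain mAP. The function names and example boxes are made up for illustration.

```python
def iou(a, b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Greedy one-to-one matching of predicted boxes to ground-truth boxes."""
    unmatched = list(ground_truth)
    true_positives = 0
    for pred in predictions:
        # Pick the remaining ground-truth box that overlaps this prediction most.
        best = max(unmatched, key=lambda gt: iou(pred, gt), default=None)
        if best is not None and iou(pred, best) >= iou_threshold:
            true_positives += 1
            unmatched.remove(best)
    precision = true_positives / len(predictions) if predictions else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Hypothetical boxes: one good prediction, one false alarm, one missed object.
gt_boxes = [(0, 0, 10, 10), (20, 20, 30, 30)]
pred_boxes = [(1, 1, 10, 10), (50, 50, 60, 60)]
print(precision_recall(pred_boxes, gt_boxes))
```

Precision drops when the model raises false alarms, and recall drops when it misses objects, which is why both are reported alongside mAP.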

All training artifacts and logs are saved under:

runs/detect/train/

YOLO takes the training and validation image paths from the dataset configuration file and looks up the corresponding label files for those images:

datasets/coco128/coco128.yaml

Output

Ultralytics 8.3.241 🚀 Python-3.9.6 torch-2.8.0 CPU (Apple M4 Pro)
Model summary (fused): 72 layers, 3,151,904 parameters, 0 gradients, 8.7 GFLOPs

- 72 layers: The fused model consists of 72 neural network layers.

- 3,151,904 parameters: Approximately 3.15 million parameters.

- 0 gradients: Indicates the model is currently in evaluation mode (no weight updates at this stage).

- 8.7 GFLOPs: Each forward pass requires approximately 8.7 billion floating-point operations.
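Once training has finished, the last stage of the workflow is inference with the trained weights. The sketch below assumes the standard Ultralytics output layout, where each run folder under runs/detect/ contains weights/best.pt. The helper names `latest_weights` and `run_inference` are hypothetical, and the image path in the usage note is a placeholder.

```python
from pathlib import Path


def latest_weights(runs_dir="runs/detect"):
    """Return the most recently modified <run>/weights/best.pt, or None if absent."""
    candidates = list(Path(runs_dir).glob("*/weights/best.pt"))
    return max(candidates, key=lambda p: p.stat().st_mtime, default=None)


def run_inference(source, weights=None):
    """Run detection on an image, video, or folder and print each detection."""
    # Imported lazily so this module loads without ultralytics installed.
    from ultralytics import YOLO

    weights = weights or latest_weights()
    if weights is None:
        raise FileNotFoundError("no trained weights found; run training first")

    model = YOLO(str(weights))
    results = model.predict(source=source, save=True)  # annotated copies go under runs/
    for result in results:
        for box in result.boxes:
            class_name = result.names[int(box.cls)]
            print(f"{class_name}: {float(box.conf):.2f}")
    return results
```

For example, `run_inference("path/to/image.jpg")` loads the newest weights and prints one line per detected object with its confidence. Picking the weights by modification time is a convenience for quick experiments; for anything reproducible, pass the exact weights path explicitly.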

Conclusion

YOLO Custom Model training transforms generic computer vision into a solution tailored for your specific scenario. With proper dataset structure, YAML configuration, and standardized commands, you can complete the full loop of data preparation, training, and inference locally. Even small datasets can quickly validate whether a model “learns and works,” making it ideal for industrial inspection, custom object recognition, or internal PoC projects.

The real value of a custom model lies in control and reproducibility, not peak metrics. Using a local virtual environment, clear project structure, and traceable outputs, you can continually refine dataset quality, training parameters, and model versions, gradually adapting the model to real-world needs. YOLO Custom Model is a sustainable engineering workflow, enabling reliable deployment of computer vision in practical scenarios.